Fractal Response to Analyzed Sound

This project explores what music would look like as a fractal. It consists of two programs, one written in Python and one in C++. The Python code takes a song or sound in the .wav format as input, analyzes it offline, and outputs a list of parameters. The C++ program reads those parameters and creates a series of fractal images that respond to the sound. After all the images are created, they are sent into ffmpeg along with the sound file to create the final video.
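The final muxing step could look roughly like the sketch below. It assumes the frames were saved as numbered PNG files and that the video runs at the 43 frames per second used in this project; the file names, the `libx264` codec choice, and the helper names are illustrative assumptions, not the actual command used here.

```python
import subprocess

def build_ffmpeg_command(frame_pattern, audio_path, output_path, fps=43):
    """Return the ffmpeg argument list that combines numbered frames with audio."""
    return [
        "ffmpeg",
        "-framerate", str(fps),   # frame rate of the input image sequence
        "-i", frame_pattern,      # hypothetical naming, e.g. frames/frame_%05d.png
        "-i", audio_path,         # the original .wav file
        "-c:v", "libx264",        # assumed video codec
        "-pix_fmt", "yuv420p",    # pixel format most players accept
        "-shortest",              # stop when the shorter input ends
        output_path,
    ]

def render_video(frame_pattern, audio_path, output_path, fps=43):
    """Run ffmpeg; raises CalledProcessError if ffmpeg reports a failure."""
    subprocess.run(
        build_ffmpeg_command(frame_pattern, audio_path, output_path, fps),
        check=True,
    )
```

Calling `render_video("frames/frame_%05d.png", "song.wav", "fractal.mp4")` would then produce the video, provided ffmpeg is installed and on the path.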

Implementation

The code that reads and analyzes the sound input is written in Python, and it is responsible for outputting the analyzed sound data. Since there are 44,100 samples per second in a sound file and 26-30 frames per second in a video file, I decided to split each second into 43 parts so that there were 43 frames per second. I found this to work best, particularly for detecting beats.

The Python code does all the analysis: root mean square (RMS), energy calculations, beat detection, and low pass, band pass, and high pass filtering.

RMS

The RMS calculation is done 43 times for every second of sound. Since there are two channels, I add the values of the left and right channels, square the result, and save the squares in an array. I then find the mean of the array and take its square root, which gives the RMS for that section of the second.
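A minimal sketch of that calculation, assuming the two channels are available as equal-length sequences of numbers and that any partial chunk at the end is dropped (the function and parameter names are illustrative):

```python
import math

def rms_per_chunk(left, right, sample_rate=44100, chunks_per_second=43):
    """Return one RMS value per chunk of the summed left and right channels."""
    chunk_size = sample_rate // chunks_per_second  # 1025 samples at 44.1 kHz
    values = []
    # Step through whole chunks only; a trailing partial chunk is ignored.
    for start in range(0, len(left) - chunk_size + 1, chunk_size):
        squares = [
            (left[i] + right[i]) ** 2          # add the channels, then square
            for i in range(start, start + chunk_size)
        ]
        values.append(math.sqrt(sum(squares) / chunk_size))  # root of the mean
    return values
```

Each value in the returned list corresponds to one video frame.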

Zero Crossings

I implemented zero crossings to see what it would look like, but the visual results were not interesting.

Beat Detection

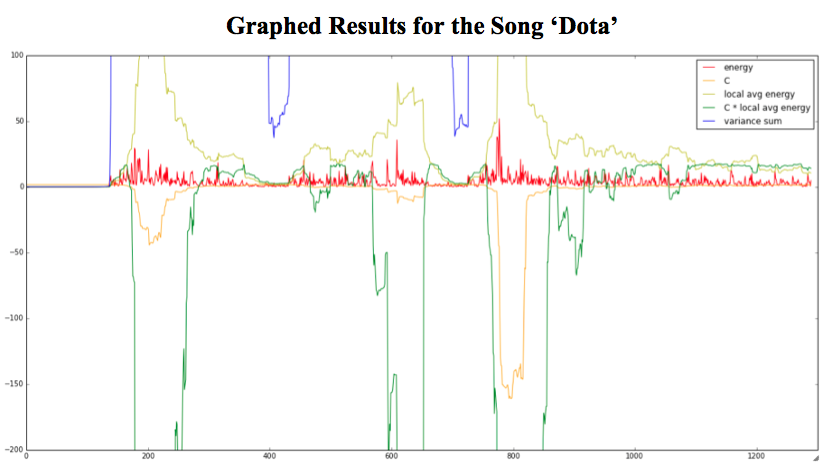

I tried to implement the beat detection algorithms explained in this article. However, these methods did not produce the results I was expecting. The article does mention that it works best on rap and techno, but visually, it was not what I was looking for even for techno. I think there was an issue with the way the values were going off the charts, but I could not find a difference in my implementation from the article.

I tried tweaking the constant, but the results were still not promising so I decided to try a different approach. I noticed that the energy, which is the line in red, seemed more correct than any of the other lines.

Instead, I implemented a beat detection that used the energy and put a threshold on its values to detect a beat, as suggested. This method worked really well. It’s not perfect, but it is a lot more accurate than the first two implementations from the Game Dev article. It is implemented the same way as RMS, with 43 chunks per second of approximately 1025 samples each.

Filters: Low Pass, Band Pass, High Pass

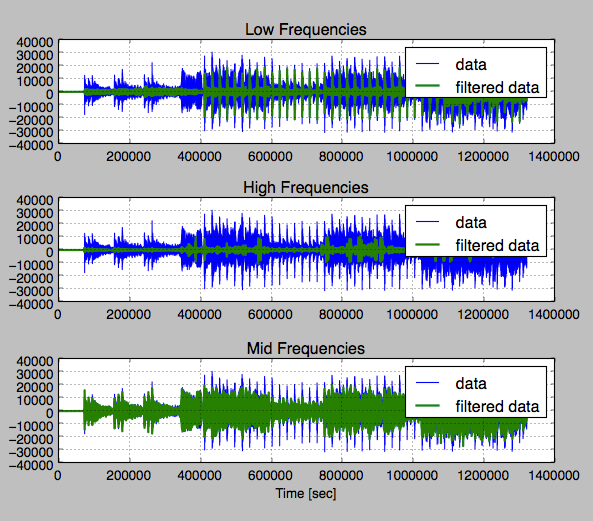

The frequency content was analyzed through a set of filters. Each filter was applied to the entire array of samples, without first separating it into chunks. Once the filtering was done, the result was split into 43 chunks per second, and the largest absolute value found in each chunk was used to represent that chunk.

The largest value was chosen for better visual results. At first, the results from all three filters were scaled to fit in the range of 0-255 for color, 0 being the smallest value found in that chunk and 255 being the largest. However, this resulted in numbers that were too close to each other, making the image nearly gray most of the time. Instead of scaling the numbers separately for each individual filter, I scaled them according to the lowest and highest (absolute) values found in any of the three filters. This method provided better colors and visual results.
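The shared scaling can be sketched as follows, assuming each filter has already been reduced to a list of per-chunk peak values; the function name and the rounding to whole numbers are assumptions made for illustration.

```python
def scale_to_bytes(low, band, high):
    """Scale three lists of peak values to 0-255 using one shared minimum and maximum."""
    all_values = [abs(v) for series in (low, band, high) for v in series]
    smallest, largest = min(all_values), max(all_values)
    span = (largest - smallest) or 1.0  # avoid dividing by zero for constant input

    def scale(series):
        return [round(255 * (abs(v) - smallest) / span) for v in series]

    return scale(low), scale(band), scale(high)
```

Because all three lists share the same minimum and maximum, a quiet band stays dark relative to a loud one instead of being stretched to the full range on its own.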

The Low Pass filter’s cutoff was at 250 Hz, the High Pass filter’s cutoff was at 6000 Hz, and the Band Pass (“middle”) filter had all the frequencies in between.

In Python, the filters are implemented with the “butter” (Butterworth) filter. The results of the three filters are shown below.

Filtered Frequencies for Taylor Swift's 'Red'
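The filtering and per-chunk peak steps described in this section might be sketched like this. The sketch assumes SciPy's `butter` design function, a mono NumPy array of samples, and a filter order of 4; the order, the second-order-section output form, and the function names are assumptions, since they are not stated above.

```python
import numpy as np
from scipy.signal import butter, sosfilt

def split_bands(samples, sample_rate=44100, low_cut=250.0, high_cut=6000.0, order=4):
    """Filter the whole signal into low, band (middle), and high components."""
    nyquist = sample_rate / 2.0
    low_sos = butter(order, low_cut / nyquist, btype="lowpass", output="sos")
    band_sos = butter(order, [low_cut / nyquist, high_cut / nyquist],
                      btype="bandpass", output="sos")
    high_sos = butter(order, high_cut / nyquist, btype="highpass", output="sos")
    return (sosfilt(low_sos, samples),
            sosfilt(band_sos, samples),
            sosfilt(high_sos, samples))

def chunk_peaks(filtered, sample_rate=44100, chunks_per_second=43):
    """Return the largest absolute value in each whole chunk of a filtered signal."""
    chunk_size = sample_rate // chunks_per_second
    n_chunks = len(filtered) // chunk_size
    trimmed = np.abs(filtered[: n_chunks * chunk_size])  # drop the partial tail
    return trimmed.reshape(n_chunks, chunk_size).max(axis=1)
```

The filters run over the full array first, and only afterwards is each output reduced to one peak per frame, matching the order of operations described above.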

Energy Calculations

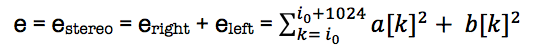

I implemented energy the way the game dev website described using the R1 formula:

I used a threshold of 6.5 to detect the beats; I found this to work best.
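A rough sketch of the energy threshold approach is given below. It assumes the energy of a chunk is the sum of the squared left and right samples, and that the threshold is compared directly against that value; whether 6.5 is meaningful depends on how the samples are normalized, which is not stated here, so the default is only a placeholder taken from the text.

```python
def chunk_energy(left, right, sample_rate=44100, chunks_per_second=43):
    """Return the summed squared amplitude of both channels for each whole chunk."""
    chunk_size = sample_rate // chunks_per_second  # 1025 samples at 44.1 kHz
    energies = []
    for start in range(0, len(left) - chunk_size + 1, chunk_size):
        energies.append(sum(
            left[i] ** 2 + right[i] ** 2
            for i in range(start, start + chunk_size)
        ))
    return energies

def detect_beats(energies, threshold=6.5):
    """Mark a chunk as a beat when its energy exceeds the threshold."""
    return [energy > threshold for energy in energies]
```

The result is one boolean per video frame, which is the form the fractal renderer needs in order to switch color schemes on a beat.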

C++

I used C++ to read the output of the Python code. The C++ code used the parameters output by the Python code to generate a series of fractal images, 43 images for every second of sound.

Mapping Details

RMS – Zooms in and out of the fractal

Energy – Affected the Zn of the fractal which I call the ‘a’ and ‘t’ parameters.

Beat Detection – In “Red” and “Any Man of Mine”, if there was a beat, the fractal would change the color of the fractal to a custom red and yellow color scheme. In the song “Red” the fractal would get one more root in addition to changing the color scheme. In “Gangnam Style”, the color would change to the rainbow custom color scheme every time there was a beat.

Low Pass – Mapped to the color blue

Band Pass – Mapped to the color red

High Pass – Mapped to the color green
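The filter-to-color mapping amounts to a reordering of the three scaled values into an RGB triple. The helper below is a hypothetical illustration in Python (the actual rendering was done in C++), assuming each value is already in the range 0-255.

```python
def frame_color(low, band, high):
    """Build an (red, green, blue) triple from the three scaled filter values."""
    red = band    # band pass drives red
    green = high  # high pass drives green
    blue = low    # low pass drives blue
    return (red, green, blue)
```

A bass-heavy frame therefore tints the fractal blue, while a frame dominated by the middle band tints it red.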

Fractal Equations

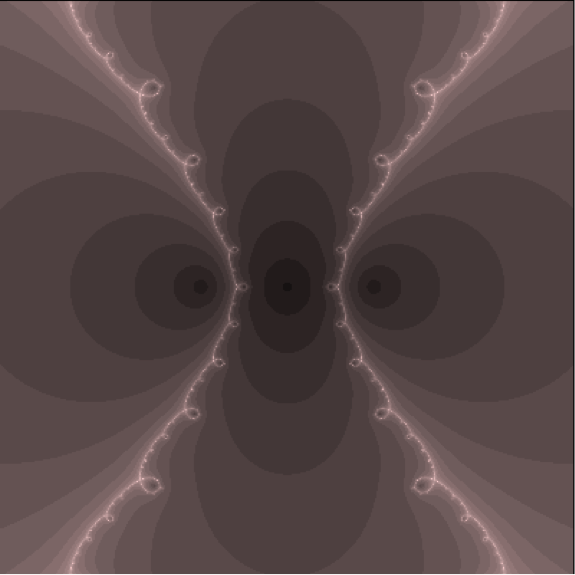

Red used a function with 3 roots, located at 1.5, 0, and -1.5, and its respective derivative.

Gangnam Style used cosh(x) – 0.2

Any Man of Mine used tan²(x) – (a + (1.0 – t) i)

I decided to use ‘a’ and ‘t’ for an extra parameter in Any Man of Mine for more interesting results.
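The use of roots together with a derivative suggests a Newton-style iteration, although the method is not named above, so the sketch below is an assumption. It is written in Python for illustration (the fractal code in this project is C++) and uses the three roots given for Red; the iteration limit, tolerance, and function names are illustrative, and the ‘a’ and ‘t’ parameters are left out.

```python
ROOTS = (1.5, 0.0, -1.5)

def f(z):
    """Cubic with roots at 1.5, 0, and -1.5, i.e. z**3 - 2.25*z."""
    return (z - 1.5) * z * (z + 1.5)

def df(z):
    """Derivative of f."""
    return 3 * z * z - 2.25

def newton_root_index(z, max_iter=50, tol=1e-6):
    """Iterate z <- z - f(z)/f'(z); return the index of the root reached, or None."""
    for _ in range(max_iter):
        slope = df(z)
        if slope == 0:
            return None  # flat spot: the Newton step is undefined
        z = z - f(z) / slope
        for index, root in enumerate(ROOTS):
            if abs(z - root) < tol:
                return index
    return None  # did not settle on a root within max_iter steps
```

Coloring each pixel by the returned index (and shading by how many steps it took) is the usual way such an iteration becomes an image; adding a root, as happens on a beat in "Red", would mean extending `ROOTS` and the two functions accordingly.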

Video Results

Here are the results for three different types of music; the quality is much better on my computer.